I have started to think of LLMs and agents less as tools and more as colleagues.

Not colleagues in the human sense, obviously. More like a new layer in how work gets done. A layer that sits between intent and execution. You describe what you want, you get a draft back, you steer it, you verify it, and you decide what ships. That loop is showing up everywhere, but it showed up first in a place where the feedback is immediate and unforgiving.

Software.

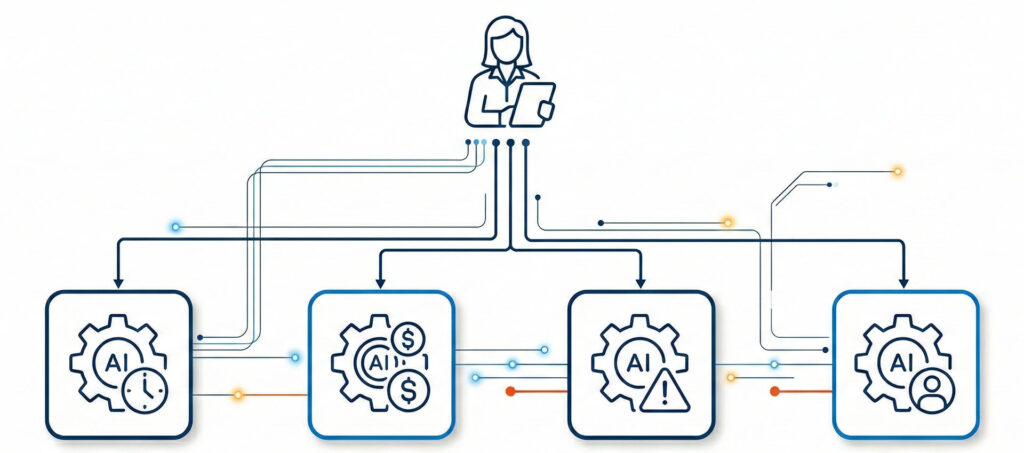

Humans as orchestra leaders, agents as specialists

The mental model that keeps making sense to me is simple: humans become orchestra leaders and agent managers.

The point is not that AI “replaces” the work. The point is that AI becomes an abstraction layer over the work function. You act like a manager with a small team of specialists you can pull in on demand.

In that setup, the human role does not disappear. It gets sharper.

Humans own the:

- Goal and the tradeoffs

- Context that is not written down

- Judgment call when the right answer is not obvious

- Quality bar and what counts as “done”

- Accountability when something goes wrong

Agents do best when they are given a bounded charter. They draft, summarize, transform, generate options, and help you move faster through the messy middle. They can be remarkably good at “first pass” work, especially when the input is unstructured and the output needs to be structured.

The winning pattern is not autonomy. It is managed delegation.

Why I do not buy full autonomy end-to-end

I am optimistic about the upside, but I am not convinced by the “set it and forget it” story.

Today’s LLMs can be brilliant and also inconsistent. They can produce something that looks complete while missing an assumption you would never accept. They can be confident and wrong. They can lose the plot when the work spans multiple systems, multiple stakeholders, and multiple constraints over time.

This is not a fundamental failing. It is just the current state of the technology.

End-to-end orchestration requires a stable world model, durable memory of constraints, and an ability to reason reliably about real consequences. In many real jobs, that bar is higher than people admit. Especially where money moves, contracts exist, or regulatory and audit requirements apply.

So, the stance I keep coming back to is this: agents should operate inside guardrails, under human management, with verification built into the workflow.

That is not a compromise. It is how you make this useful.

Vibe coding is the first breakout example

Vibe coding is the first place I have seen this new abstraction feel undeniably real.

The programmer is still responsible for the result, but the programmer’s role shifts. Less time typing. More time directing. More time reviewing. More time shaping. More time testing. More time integrating.

The loop becomes something like:

- State the intent

- Get a draft implementation

- Critique it like a peer review

- Refine with tighter instructions

- Verify with tests and real execution

- Integrate into the larger system
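To make the loop concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: `run_loop`, `fake_agent`, and the two checks are hypothetical names, and `fake_agent` stands in for a real model call. The sketch covers the draft, critique, refine, and verify steps. Integration stays outside the code on purpose, because that is the human's decision.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-in for an agent call: takes the intent plus the feedback
# gathered so far and returns a draft. A real system would call a model here.
DraftFn = Callable[[str, List[str]], str]
# A check returns an empty string on success, or a critique to feed back.
Check = Callable[[str], str]


@dataclass
class LoopResult:
    draft: str
    accepted: bool          # True only means the checks passed, not "ship it"
    rounds: int
    feedback: List[str] = field(default_factory=list)


def run_loop(intent: str, draft_fn: DraftFn, checks: List[Check],
             max_rounds: int = 3) -> LoopResult:
    """Draft, critique, and refine until every check passes or rounds run out."""
    feedback: List[str] = []
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = draft_fn(intent, feedback)
        critiques = [msg for msg in (check(draft) for check in checks) if msg]
        if not critiques:
            return LoopResult(draft, True, round_no, feedback)
        feedback.extend(critiques)   # tighter instructions for the next round
    return LoopResult(draft, False, max_rounds, feedback)


def fake_agent(intent: str, feedback: List[str]) -> str:
    # Illustrative only: improves the draft once it is told what is missing.
    if any("docstring" in note for note in feedback):
        return 'def add(a, b):\n    """Add two numbers."""\n    return a + b\n'
    return "def add(a, b):\n    return a + b\n"


def has_docstring(draft: str) -> str:
    return "" if '"""' in draft else "missing docstring"


def actually_runs(draft: str) -> str:
    # Verify by real execution. A real setup would run untrusted drafts in a
    # sandbox or a test runner instead of calling exec directly.
    scope: dict = {}
    try:
        exec(draft, scope)
        return "" if scope["add"](2, 3) == 5 else "add(2, 3) did not return 5"
    except Exception as exc:
        return f"execution failed: {exc}"


result = run_loop("write an add function", fake_agent,
                  [has_docstring, actually_runs])
print(result.accepted, result.rounds, result.feedback)
```

The bounded `max_rounds` matters as much as the checks: if the draft never meets the bar, the loop hands the problem back to the human instead of spinning.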

Some people hear vibe coding and assume it means “no skill required.” I think that is backwards.

The skill moves. You trade raw production for judgment, system awareness, debugging instincts, and taste. You become less of a typist and more of a director and editor.

And once you see it in software, it is hard not to see it everywhere else.

My brother at CBS and the “AI writing” stigma

I had a conversation with my brother, who is a tenured professor at Copenhagen Business School. He was quizzing me about vibe coding and what it implies for programmers.

The interesting part is what happened next.

I told him, half joking and half serious, that he should expect his own work to be reshaped by the same orchestration model. Not because “AI will write papers instead of professors,” but because drafting, structuring, outlining, rewriting, and exploring alternatives are exactly the kinds of tasks that fit the agent-as-team-member pattern.

His first reaction was not technical. It was cultural.

He pushed back on the stigma. The “AI writing” label carries the assumption that using assistance is somehow illegitimate. That it is cheating rather than a workflow. He argued that if we want this to become normal, the stigma has to change.

I think he is right, and I also think the way stigma changes is not by pretending the tools do not matter. It changes when we redefine what we actually value.

The norms will shift from “Did you use AI?” to “Can you defend the work?”

For most knowledge work, the real question is not whether you used assistance. The real question is whether the work holds up.

Can you defend your claims? Can you point to sources? Can you explain why you made the choices you made? Can you show your assumptions? Can you validate that the output is consistent with reality, policy, or evidence?

In other words, the credibility stack changes.

Disclosure norms will vary by field. Academic contexts will have different expectations than marketing contexts or product contexts. But the direction feels predictable: the focus moves away from policing the presence of AI and toward policing the integrity of outcomes.

If you are accountable for the result, then using a drafting assistant should not be the scandal. Shipping something you cannot defend should be.

Marketing is already becoming an agent team sport

Around the same time, I had a conversation with our marketing lead. I was trying to make the “agent team” framing concrete.

Instead of thinking about a single general-purpose assistant, we talked about a small team of specialists that map to real tasks.

One agent drafts a landing page. Another repurposes it into a podcast outline. Another turns the podcast into social content. Another checks brand voice and consistency. Another produces variations for different audiences.

The human does not vanish. The human becomes editor-in-chief and release manager. The person who holds the narrative, chooses what matters, and decides what goes public.

Again, the pattern is delegation.

Not abdication.

In enterprise work, the guardrails are the product

My day job lives in enterprise project execution software, where governance is not optional. Approvals matter. Audit trails matter. Systems of record matter. What counts as “official” is defined by roles, workflows, and policies.

That context makes the human-led model even more obvious.

The agents we are developing are designed to behave like managed team members aligned to real roles. They can draft and propose. They can translate unstructured inputs into structured objects. They can explain variance, summarize what changed, and suggest actions.

But they do not own the baseline. They do not silently rewrite project plans. They do not bypass approvals.

In enterprise settings, autonomy is not the goal. Trust is the goal, and trust comes from traceability, review, and clear responsibility.
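One way to picture “propose, but do not own the baseline” is a small Python sketch like the one below. It is an illustration under stated assumptions, not a description of any real product: `Proposal`, `Baseline`, the role name `pm_alice`, and the dates are all hypothetical. The idea is that an agent can only create a proposal, a named human approver has to sign it, and every attempt lands in an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional, Set


@dataclass
class Proposal:
    proposed_by: str                    # hypothetical agent identifier
    key: str                            # which baseline field would change
    new_value: str
    rationale: str
    approved_by: Optional[str] = None   # set only through Baseline.approve


@dataclass
class Baseline:
    values: Dict[str, str]
    approvers: Set[str]                 # humans allowed to approve changes
    audit_log: List[str] = field(default_factory=list)

    def _log(self, event: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} {event}")

    def approve(self, proposal: Proposal, user: str) -> None:
        if user not in self.approvers:
            self._log(f"DENIED {user} tried to approve change to {proposal.key}")
            raise PermissionError(f"{user} is not an approver")
        proposal.approved_by = user
        self._log(f"APPROVED {proposal.key} by {user}")

    def apply(self, proposal: Proposal) -> None:
        # The guardrail: nothing reaches the baseline without an approval.
        if proposal.approved_by is None:
            self._log(f"BLOCKED unapproved change to {proposal.key} "
                      f"from {proposal.proposed_by}")
            raise PermissionError("unapproved proposals cannot change the baseline")
        old = self.values.get(proposal.key)
        self.values[proposal.key] = proposal.new_value
        self._log(f"APPLIED {proposal.key}: {old!r} -> {proposal.new_value!r}")


# Illustrative values only.
baseline = Baseline(values={"finish_date": "2025-06-30"}, approvers={"pm_alice"})
proposal = Proposal("scheduler_agent", "finish_date", "2025-07-14",
                    rationale="vendor delivery slipped two weeks")
baseline.approve(proposal, "pm_alice")
baseline.apply(proposal)
print(baseline.values["finish_date"], len(baseline.audit_log))
```

In this sketch the blocked and denied attempts are logged too, which is what makes the trail useful for review later.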

The most useful form of “agentic” work is often the boring kind. Preparing a clean change request package. Turning messy notes into a structured list of project risks and action items. Producing an audit-ready status narrative that maps to the data.

That is what actually saves time.

Delegation feels strange, and agents amplify that feeling

There is a management lesson hiding in all of this: delegation always feels weird at first.

Anyone who has been a new manager recognizes the pattern. You know how to do the work. You could do it faster yourself. You worry it will not match your standards. You worry you will lose control. So you hover. You micromanage. Or you swing the other way and delegate too much and get burned.

Managing agents triggers the same psychology.

If you treat an agent like a vending machine, you will be disappointed. If you treat it like a fully autonomous employee, you will also be disappointed.

The skill is the middle path: clear intent, clear acceptance criteria, tight feedback loops, and review discipline.

Good delegation is a system:

- Define the outcome

- Define what “good” looks like

- Constrain the agent’s scope

- Require citations or traceability where needed

- Review and refine until it meets the bar

- Build reusable patterns so the process gets faster over time

If there is a “career skill” buried in this shift, it is that. The ability to run a clean loop.
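The delegation system above can be written down as data, which is one way to make it reusable. The following Python sketch assumes a hypothetical `Charter` that records the outcome, the allowed scope, the acceptance criteria, and whether sources are required. A `review` call then lists everything a deliverable got wrong. The names and criteria are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List


@dataclass
class Deliverable:
    action: str                         # what the agent did, e.g. "summarize"
    text: str
    sources: List[str] = field(default_factory=list)


@dataclass
class Charter:
    outcome: str                                            # define the outcome
    allowed_actions: FrozenSet[str]                         # constrain the scope
    acceptance_criteria: Dict[str, Callable[[str], bool]]   # what "good" means
    require_sources: bool = True                            # traceability

    def review(self, d: Deliverable) -> List[str]:
        """Return a list of problems; an empty list means it meets the bar."""
        problems: List[str] = []
        if d.action not in self.allowed_actions:
            problems.append(f"out of scope: {d.action}")
        if self.require_sources and not d.sources:
            problems.append("no sources cited")
        for name, passes in self.acceptance_criteria.items():
            if not passes(d.text):
                problems.append(f"failed criterion: {name}")
        return problems


# Hypothetical charter for a risk-summary task.
charter = Charter(
    outcome="One-paragraph risk summary for the weekly report",
    allowed_actions=frozenset({"summarize"}),
    acceptance_criteria={
        "under 50 words": lambda t: len(t.split()) <= 50,
        "names an owner": lambda t: "owner" in t.lower(),
    },
)

good = Deliverable("summarize", "Risk owner: Dana. Mitigation due Friday.",
                   sources=["meeting-notes.txt"])
bad = Deliverable("update_schedule", "Done.")

print(charter.review(good))
print(charter.review(bad))
```

Because the charter is an ordinary object, the same one can be reused across tasks, which is where the process gets faster over time.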

The distraction risk: building “agents for everything” instead of building lasting capability

There is a pattern I keep seeing as the agent conversation heats up. The push is relentless: ship agents fast, attach them to as many use cases as possible, and prove momentum.

The problem is that under that pressure, people default to familiar software patterns. They build agents that do what traditional software already does better: deterministic workflows, simple rule evaluation, CRUD operations, routing, and basic automation. You end up with an “agent layer” acting like a noisy wrapper around functions that should stay predictable.

That is not just inefficient. It pulls attention away from the harder work, which is identifying the places where agents are uniquely valuable.

The durable use cases tend to be the ones that sit between messy human reality and structured systems. Translating unstructured inputs into governed objects. Summarizing complex situations into defensible narratives. Surfacing drivers, options, and tradeoffs across multiple constraints. Packaging decisions so the right humans can approve them quickly. Those are not flashy demos, but they compound over time.
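As a sketch of what “translating unstructured inputs into governed objects” can look like at the boundary, the Python below assumes an agent has already turned meeting notes into JSON. The deterministic part is plain code: it validates that JSON strictly and refuses anything that does not fit. The `RiskItem` fields and the allowed severities are assumptions made up for the example, not a real schema.

```python
import json
from dataclasses import dataclass
from typing import List

# Assumed vocabulary for this example only.
ALLOWED_SEVERITIES = {"low", "medium", "high"}
REQUIRED_FIELDS = {"title", "owner", "severity"}


@dataclass(frozen=True)
class RiskItem:
    title: str
    owner: str
    severity: str


def parse_risks(agent_output: str) -> List[RiskItem]:
    """Validate agent-produced JSON; raise ValueError rather than guess."""
    try:
        raw = json.loads(agent_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(raw, list):
        raise ValueError("expected a JSON list of risks")

    items: List[RiskItem] = []
    for i, entry in enumerate(raw):
        if not isinstance(entry, dict):
            raise ValueError(f"risk {i}: expected an object")
        missing = REQUIRED_FIELDS - set(entry)
        if missing:
            raise ValueError(f"risk {i}: missing {sorted(missing)}")
        severity = str(entry["severity"]).lower()
        if severity not in ALLOWED_SEVERITIES:
            raise ValueError(f"risk {i}: unknown severity {entry['severity']!r}")
        items.append(RiskItem(str(entry["title"]).strip(),
                              str(entry["owner"]).strip(),
                              severity))
    return items


# Illustrative agent output.
draft = '[{"title": "Vendor delay", "owner": "Dana", "severity": "High"}]'
print(parse_risks(draft))
```

The split is deliberate: the agent handles the messy reading, and predictable code decides what is allowed into the system of record.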

If you want a lasting initiative, the goal should not be “more agents.” The goal should be “better leverage.” Fewer agents, tighter charters, strong guardrails, and workflows designed around accountability.

The question guiding my own experiments

In my own work, I keep coming back to a simple question:

If I treat agents like team members under human management, what parts of project execution get materially better?

That is what we are exploring in our project domain. Not a fantasy of autonomous project delivery, but a human-led operating model where role-based agents support the work.

- A project manager agent that turns live telemetry into a defensible weekly narrative and flags decisions that need attention

- A scheduler agent that watches dependencies and critical path movement and proposes recovery scenarios

- A controls agent that explains variance drivers and drafts forecast assumptions

- A change control agent that turns messy scope conversations into structured change requests and impact packets

- A risk agent that curates RAIDO hygiene and highlights leading indicators

Each one is scoped, reviewable, and aligned to how real teams already operate.

So, my personal experiment is not “where can an agent replace a person?” It is “where can an agent make a good person faster, more consistent, and less buried in admin?”

And I ask myself the management question too: where am I hesitating because delegation feels like loss of control?

That is the shift I think more of us are going to have to learn. Not just how to use AI, but how to manage it.

— 0 —

I wrote this post in collaboration with my research and blog agents, using the same human-led orchestration model I describe above. It started as a rough internal prompt where I was trying to articulate how I see agents fitting into enterprise work: humans stay accountable as the “orchestra leaders,” while role-specific agents act like managed specialists. From there, I had the agents help me pressure-test the framing, turn it into a clear outline, and draft the post in my voice. I then edited it the way I would edit any teammate’s work: tightening sections, adjusting emphasis, and making sure the argument was coherent and defensible. The end result is not “AI wrote this for me.” It is a practical example of delegation, iteration, and review, with me owning the final call on what ships.